How do you create ethical AI?

This AI ethics guide is a start. Use it to teach kids about ethics in Artificial Intelligence.

AI is part of the fabric of our lives now, whether we like it or not. But rather than reducing technologies to broad categories of “good” or “bad,” we can thoroughly evaluate those technologies and our interactions with them. We need to understand the complex effects of AI technologies, and build fairer, more accurate tools. For the second season of Technovation Families, we created new units focused on ethics and AI for good to help address this need.

Families participating in Technovation Families use actual AI tools to create technological solutions to pressing community problems. To help kids and parents develop a nuanced understanding of AI effects, we also created a free guide about AI ethics, in partnership with Hogan Lovells, a law firm that advises on artificial intelligence issues. Teachers and parents can use this guide to teach kids about AI and our responsibilities when we create with it.

Guiding questions to create responsible, ethical AI for good

This set of guiding questions will help people use AI to make positive change in the world. Educators and parents can use this guide to lead conversations about AI, including what AI is, where it’s used, how to assess new technologies for unintended negative impacts, and what ethics are and why they matter when using AI.

12 questions to test whether an AI tool or product is ethical

We’ve collected 12 guiding questions you can use to critically evaluate AI tools and products – whether they’ve been built by students or by major tech companies. Here’s a peek at the first 3:

QUESTION 1

What decisions is the AI making? While some decisions (or classifications), such as whether a plant is a weed or not, may be harmless, others may have negative consequences, especially if the AI model takes certain actions as a result of those classifications. For instance, a model could be designed to guess an individual’s gender or determine whether an individual has a disability or illness – and if that individual doesn’t have a chance to correctly classify themselves, that creates a potentially dangerous situation. AI creators need to consider the risk that their AI might misclassify something.

QUESTION 2

After the AI makes a decision, what action does it take next? The answer to this question is important for understanding both the good and the bad impacts of the technology you’re evaluating. It is easier to reduce the potential harms the product might create (for instance, by changing the decisions the product is making, or the actions it takes as a result of its decisions) before development has started, but addressing potential issues any time before a product is brought to market is better than not addressing them at all.

QUESTION 3

What would happen if the AI makes a mistake? Before a product idea enters development, it is important to think about what might happen if your model misclassifies a person, object, or other information. What are the potential worst-case scenarios if your product makes an error? It’s important to think about how errors might impact the purpose of your product or cause harm.

Use a decision tree to build fair AI

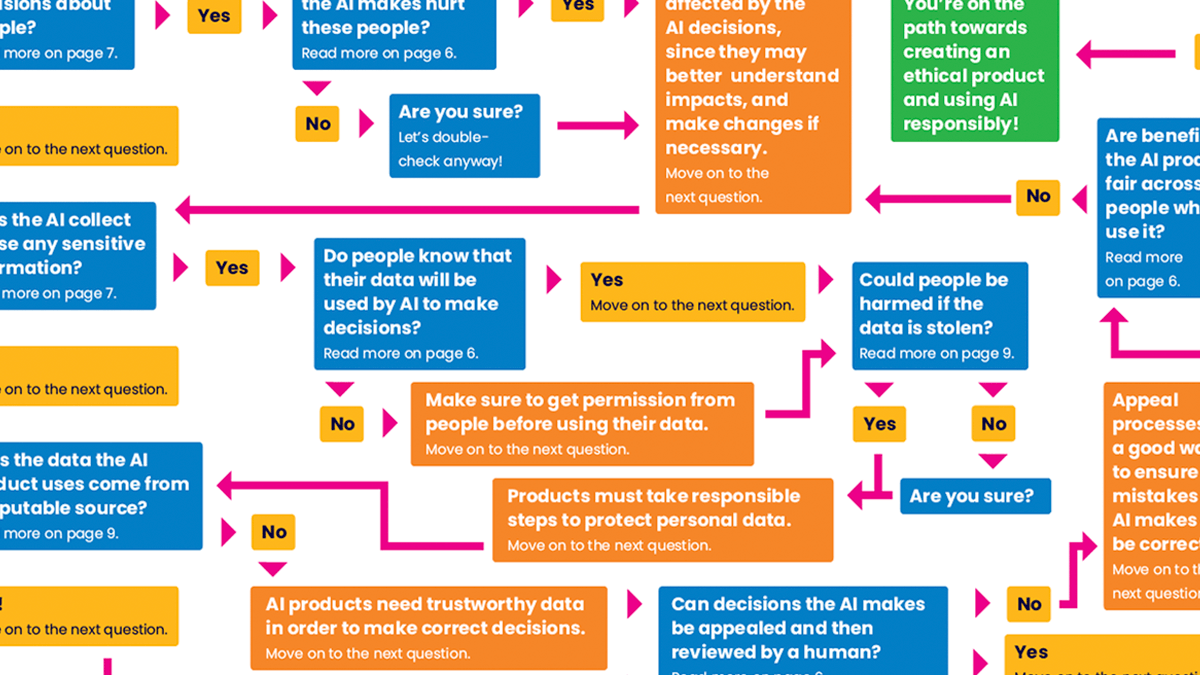

Ask these questions early in the ideation and development processes to create fairer AI. To help structure this stage of development, we’ve included a decision tree that walks you through the key questions an AI developer should ask themselves.

Going back to question 1 (“What decisions is the AI making?”), it’s especially important to center its potential effects on people. So we start by asking: does this AI make decisions about people? An example of this could be a tool that screens job applicants, or a tool that makes recommendations for sentences in a courtroom.

If it does make decisions about people, the next big question is whether those decisions could hurt those people, and how. Another important question looks at the other side of the equation: who benefits from the decisions the AI makes? Does this AI tool treat people fairly and equally?
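For learners who are comfortable with a little code, the questions above can be sketched as a short Python program. This is only an illustration, not the decision tree from the guide: the `ToolReview` class, the `follow_up_questions` function, and the order of the checks are assumptions made for this example, and the prompts are paraphrased from the questions in this article.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ToolReview:
    """A reviewer's answers about one AI tool (hypothetical structure)."""
    name: str
    decides_about_people: bool
    could_hurt_people: bool = False
    treats_people_fairly: bool = True


def follow_up_questions(review: ToolReview) -> List[str]:
    """Return the follow-up prompts suggested by the reviewer's answers."""
    # Every tool gets this prompt, whether or not it decides about people.
    prompts = ["What would happen if the AI makes a mistake?"]
    if not review.decides_about_people:
        return prompts
    # The tool decides about people, so look at both sides of the equation.
    prompts.append("Who benefits from the decisions the AI makes?")
    if review.could_hurt_people:
        prompts.append(
            "How could these decisions hurt people, and can the "
            "decisions or actions be changed?"
        )
    if not review.treats_people_fairly:
        prompts.append("What would make this tool treat people fairly and equally?")
    return prompts


# Two examples drawn from this article: a plant classifier and a job screener.
weed_spotter = ToolReview("weed spotter", decides_about_people=False)
job_screener = ToolReview(
    "job applicant screener",
    decides_about_people=True,
    could_hurt_people=True,
    treats_people_fairly=False,
)

for review in (weed_spotter, job_screener):
    print(review.name)
    for prompt in follow_up_questions(review):
        print("  -", prompt)
```

The yes/no answers still have to come from a person thinking carefully about the tool; the code only keeps track of which questions to ask next.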

The flowchart arranges big questions in a clear and useful way and can help kids (or learners of any age!) understand how complex ethical questions are, and how many questions we need to ask to understand the potential effects of technology tools and products. This handful of questions is just the beginning!

To see the full decision tree and access all 12 guiding questions, download the free AI ethics guide here.

Who is this guide for? We designed this guide for educators and parents who want to help their kids develop a more nuanced understanding of the technologies that shape our lives… but we think anyone looking for a tool to guide them through the process of evaluating technology for fairness, safety, and equality could find it useful.